Miscellanea for the week ending 26-Nov-2023:

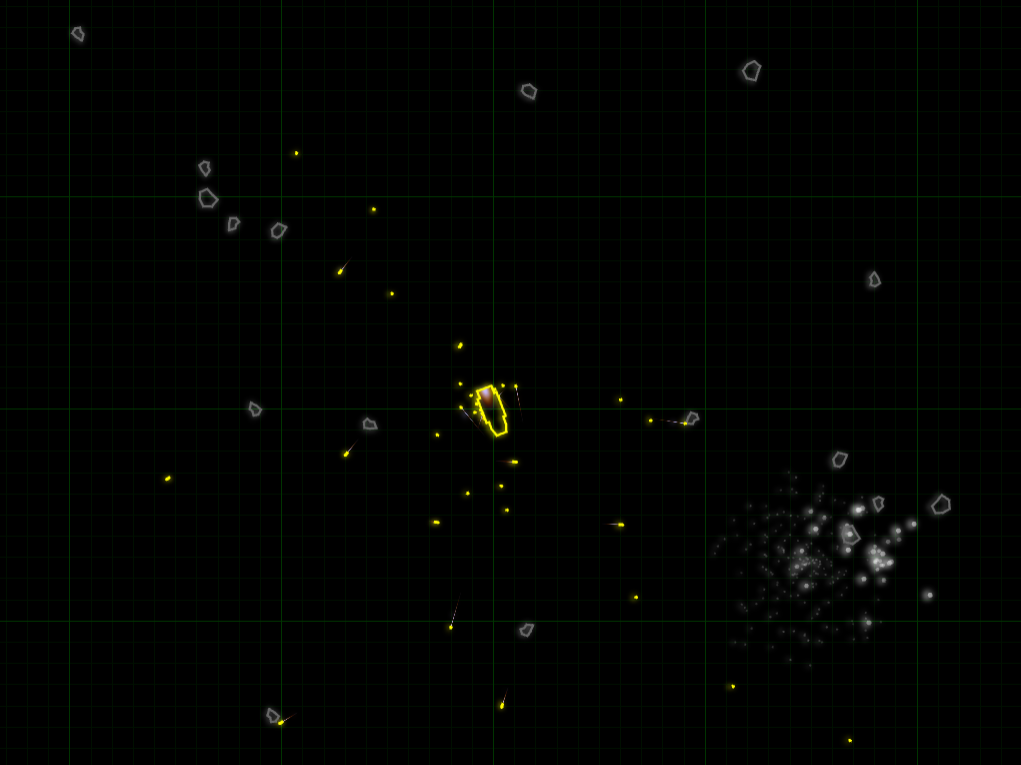

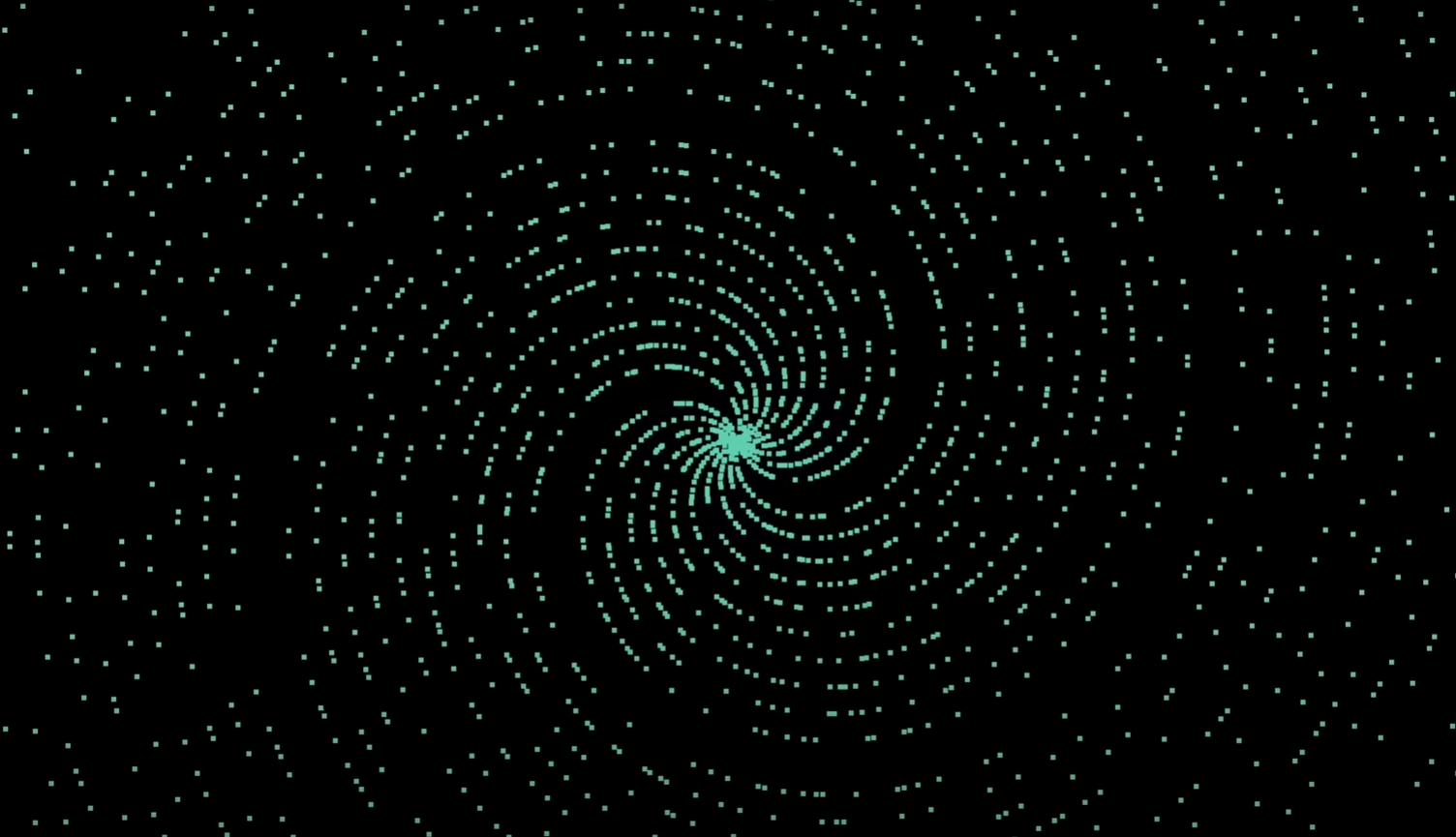

- Prime Numbers and Spirals - Plot prime numbers as polar coordinates and you get spirals. An easy-to-follow explanation of why this surprising result occurs, and how it relates to approximations of pi, Euler’s totient function and Dirichlet’s theorem.

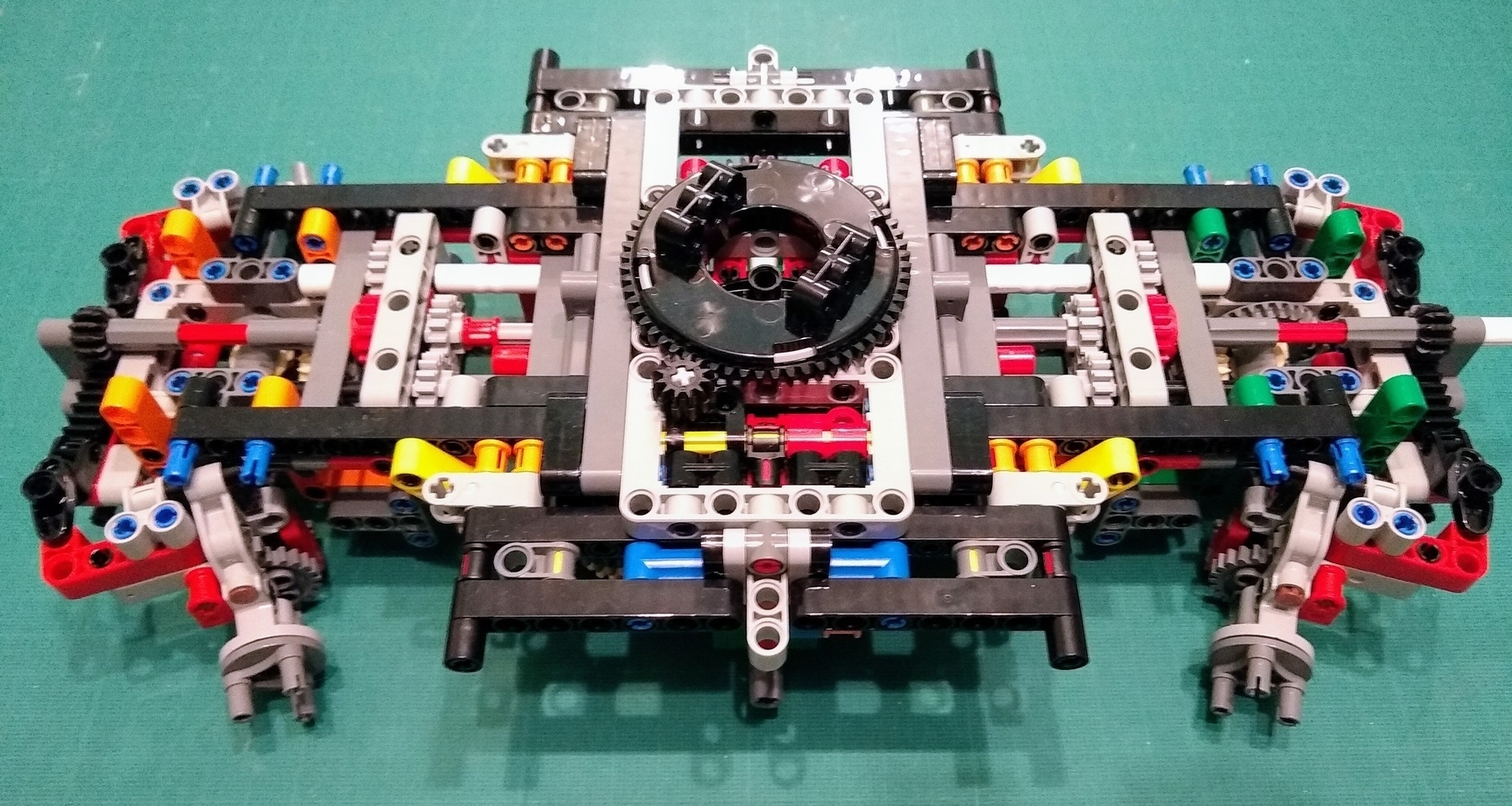

- Lego DNA 2.0: Double Helix History - an educational Lego Ideas design celebrating 70 years since the discovery of the double helix. Half the kit is a representation of the King’s College and Cambridge labs where the structure of DNA was discovered, including a Rosalind Franklin minifig, her X-ray diffraction camera, and Photo 51. The other half of the kit is a realistic model of four codons of DNA. I think I learned more about how the bases actually fit together in three dimensions from the Lego DNA 2.0 Trailer than I did from A-level biology.

- Volumetric Display using an Acoustically Trapped Particle - generating 3D animated images by moving a 1 mm foam ball with an ultrasonic phased array. I’ve seen simple levitation by ultrasonic transducers, but this is next level. And for good measure, the design files have been published on GitHub.

- Melting Windows - the strange tale of why the windows fell out of an Airbus A321 in flight last month, with lots of fascinating details of how airliner windows are constructed.

- A Guide to the Orders of Trilobites - documents everything you ever wanted to know about trilobites and much that you never thought to ask. I’ve been reading about trilobites for years, but I’m learning so much more from this site.
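
For anyone who wants to poke at the prime spirals from the first item, here is a minimal Python sketch. It is not taken from the linked explanation. It assumes each prime p is placed at radius p and angle p radians, and the names `primes_up_to` and `polar_to_xy` are made up for illustration. It uses the fact that 44 radians is very nearly 7 full turns to count the spiral arms the primes can occupy.

```python
import math


def primes_up_to(n):
    """Sieve of Eratosthenes: return all primes <= n (assumes n >= 2)."""
    sieve = bytearray([1]) * (n + 1)
    sieve[0:2] = b"\x00\x00"  # 0 and 1 are not prime
    for i in range(2, math.isqrt(n) + 1):
        if sieve[i]:
            # Cross out every multiple of i, starting at i*i.
            sieve[i * i :: i] = bytearray(len(range(i * i, n + 1, i)))
    return [i for i, flag in enumerate(sieve) if flag]


def polar_to_xy(p):
    """Use p as both the radius and the angle (in radians)."""
    return p * math.cos(p), p * math.sin(p)


primes = primes_up_to(10_000)
points = [polar_to_xy(p) for p in primes]

# 44 / (2 * pi) is about 7.003, so numbers sharing a residue mod 44
# land along the same gently curving arm. Primes above 44 can only
# use residues coprime to 44, and there are totient(44) of those.
arms = {p % 44 for p in primes if p > 44}
totient_44 = sum(1 for r in range(44) if math.gcd(r, 44) == 1)
print(len(arms), totient_44)
```

Passing `points` to a scatter plot (matplotlib's `plt.scatter`, for example) should show the arms. The two printed counts matching is the totient function and Dirichlet's theorem at work on a small scale.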